Picture this scenario: your website rankings have taken a sudden and significant dive. Your pages may have fallen several positions or dropped out of the top 100 entirely. You feel nervous and start to panic, but don’t worry; this is a typical occurrence in the world of SEO. The critical thing is to stay calm and work through a checklist to determine the problem’s root cause.

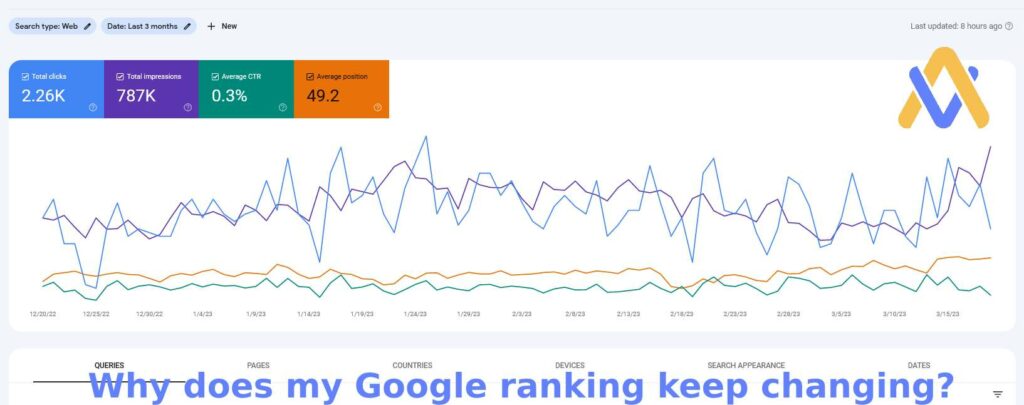

The first step is always to verify if your ranking tracking tool is accurate, as it may have technical issues. You can also check your website’s analytics suite and Google Search Console to confirm if there has been a genuine drop in rankings and organic traffic.

If you still see signs of a ranking drop, assess its impact by listing all the search queries affected. For each query, note the cluster it belongs to, its old and new rankings, the URL and content type that was ranking, and whether the page was indexable. This process will help you see patterns and identify the sections of your website that are most affected. You can use a template to facilitate this process.
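The inventory described above can be kept in a spreadsheet, but a small script makes the patterns easier to spot. The following is a minimal sketch, not a prescribed template: the `QueryRecord` fields mirror the attributes listed above, while the names `QueryRecord`, `summarize_by_cluster`, and the `NOT_RANKED` placeholder value are assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional

# Hypothetical placeholder rank for a query that left the top 100.
NOT_RANKED = 101


@dataclass
class QueryRecord:
    query: str
    cluster: str
    old_rank: int
    new_rank: Optional[int]  # None means the query is no longer in the top 100
    url: str
    content_type: str
    indexable: bool

    @property
    def drop(self) -> int:
        """Positions lost; a negative value means the ranking improved."""
        new = self.new_rank if self.new_rank is not None else NOT_RANKED
        return new - self.old_rank


def summarize_by_cluster(records):
    """Return {cluster: (affected_queries, average_drop)}, worst cluster first."""
    grouped = defaultdict(list)
    for record in records:
        if record.drop > 0:  # only count queries that actually lost positions
            grouped[record.cluster].append(record.drop)
    summary = {c: (len(d), sum(d) / len(d)) for c, d in grouped.items()}
    return dict(sorted(summary.items(), key=lambda kv: kv[1][1], reverse=True))
```

Sorting clusters by average drop puts the hardest-hit section of the site at the top, which is usually where the investigation should start.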

One of the common reasons for ranking drops is a significant change made to a website, such as a redesign or content overhaul. Therefore, asking your team and agency if they have made any high-impact changes is crucial. It’s also essential to check project management software and code repositories for related activities. Don’t panic, and check your change logs before attributing the drop to algorithm updates or penalties.

If your website rankings are essential to your business, you should monitor the site for on-page SEO changes using a monitoring tool that distinguishes between technical and content changes. Technical changes may affect the entire website, while content changes often affect only specific pages. By following these steps, you can quickly diagnose and address the underlying issue causing the ranking drop.

Ensure that your website is still being crawled and appropriately indexed by search engines after a sudden drop in rankings.

To do this, conduct a website crawl and check for any changes in the following technical areas:

- HTTP status codes: Verify that your pages are still returning HTTP status 200 and that redirects are still in place.

- Canonical URL: Check if there are any changes to your canonical URLs.

- Meta robots tag: Ensure that the meta robots tag on your important pages does not contain a noindex directive.

- Robots.txt: Check if your robots.txt file has been modified, and confirm that search engines can still access all sections of your website that need to be indexed.

- Hreflang: Verify that your hreflang tags are still set up correctly.
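The checks in the list above can be sketched with Python’s standard library. This is an illustrative sketch under stated assumptions, not a replacement for a full crawler: it assumes you have already fetched each page’s status code, HTML, and the site’s robots.txt text, and the names `IndexabilityParser` and `audit_page` are hypothetical. It also treats any canonical that points to a different URL as worth reviewing, which may be intentional on some pages.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class IndexabilityParser(HTMLParser):
    """Collects canonical, meta robots, and hreflang values from a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.robots = None
        self.hreflang = {}

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "link":
            rel = (a.get("rel") or "").lower()
            if rel == "canonical":
                self.canonical = a.get("href")
            elif rel == "alternate" and a.get("hreflang"):
                self.hreflang[a["hreflang"]] = a.get("href")
        elif tag == "meta" and (a.get("name") or "").lower() == "robots":
            self.robots = (a.get("content") or "").lower()


def audit_page(url, status, html, robots_txt,
               expected_hreflang=None, user_agent="Googlebot"):
    """Return a list of human-readable indexability issues for one page."""
    parser = IndexabilityParser()
    parser.feed(html)

    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())

    issues = []
    if status != 200:
        issues.append(f"status {status}")
    if parser.canonical and parser.canonical != url:
        issues.append(f"canonical points to {parser.canonical}")
    if parser.robots and "noindex" in parser.robots:
        issues.append("meta robots noindex")
    if not rules.can_fetch(user_agent, url):
        issues.append("blocked by robots.txt")
    if expected_hreflang is not None and parser.hreflang != expected_hreflang:
        issues.append("hreflang changed")
    return issues
```

Running `audit_page` over the URLs that lost rankings, and comparing the results with a crawl from before the drop, shows which of these signals changed.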

Modifications to Internal Linking Structure

Changes made to a website’s internal linking structure can considerably affect your pages’ SEO performance. The impact can be significant if changes have been made to links on the homepage, authoritative pages, sidebar, or footer. It is particularly common to see substantial shifts in the internal link structure after a website redesign, so watching for such changes is important.
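One way to spot such changes is to compare the internal links on a key page, such as the homepage, before and after a redesign. The sketch below assumes you have two HTML snapshots of the same page (for example, from an archived copy and the live site); the names `LinkCollector`, `internal_links`, and `diff_internal_links` are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects absolute URLs of links that stay on the same host."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        absolute = urljoin(self.base_url, href)
        # Keep only links pointing at the same host as the page itself.
        if urlparse(absolute).netloc == urlparse(self.base_url).netloc:
            self.links.add(absolute.split("#")[0])  # ignore fragments


def internal_links(base_url, html):
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.links


def diff_internal_links(base_url, old_html, new_html):
    """Return the internal links removed from and added to a page."""
    old = internal_links(base_url, old_html)
    new = internal_links(base_url, new_html)
    return {"removed": sorted(old - new), "added": sorted(new - old)}
```

Links that appear under `removed` on the homepage, sidebar, or footer are good candidates to check against the pages that lost rankings.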

Updates in Content

Search engines rely on content to assess relevance for search queries, so changes to content can significantly affect your rankings. Removing pages from the website can have an even larger effect. To check for content changes, crawl your website and pay attention to the following:

- Title tag: Even minor changes to the title tag can significantly affect your rankings. Check for any modifications.

- Headings: Search engines also use headings to determine relevancy, so changes to headings can affect your rankings. Check if they’ve been altered.

- Meta description: While the meta description doesn’t affect your rankings directly, it can affect the click-through rate (CTR) of your search result, which can in turn affect your rankings. Check for changes to your meta descriptions.

Technical Challenges

If your website is experiencing technical challenges, such as crawl errors, this can significantly affect your search rankings. Crawl errors occur when search engines run into problems while accessing and indexing your site’s content. If these problems persist, they can hinder the ability of search engines to rank your content effectively.

Let’s discuss the possibility of crawl anomalies causing a drop in your Google rankings. This common issue occurs when Google cannot correctly access and index your website.

You can start by checking the Page indexing report in Google Search Console (formerly called the Index Coverage report) to identify crawl anomalies. This report shows which URLs Google could not index and the reasons it gives for each.

Additionally, analyzing your website’s log files can provide valuable insights into crawl activity from search engine bots and visitors. By focusing on the affected URLs you listed when assessing the impact of the drop, you can check for any decrease in crawl activity from Google or an increase in 4xx and 5xx status codes.
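A log check of this kind can be sketched as follows. This example assumes your server writes the common "combined" access log format and identifies Googlebot only by its user-agent string, which can be spoofed; a stricter check would also verify the requesting IP address. The names `LOG_PATTERN` and `googlebot_activity` are hypothetical.

```python
import re
from collections import Counter

# Matches one line of the "combined" access log format (an assumption about
# your server configuration; adjust the pattern if your format differs).
LOG_PATTERN = re.compile(
    r'\S+ \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] '
    r'"(?:\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_activity(lines, affected_paths):
    """Count Googlebot hits per day and 4xx/5xx responses for affected paths."""
    hits_per_day = Counter()
    errors = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        if match["path"] not in affected_paths:
            continue
        hits_per_day[match["day"]] += 1
        status = int(match["status"])
        if status >= 400:
            errors[(match["path"], status)] += 1
    return hits_per_day, errors
```

A falling daily hit count or a growing error counter for the affected paths in the days before the drop points to a crawl problem rather than a content or algorithm issue.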

Addressing crawl anomalies as soon as possible is important to prevent further negative impacts on your rankings. Occasionally, crawl anomalies can be caused by technical issues such as server errors, broken links, or incorrect robots.txt settings. By identifying and fixing these issues, you can ensure that Google can properly crawl and index your website.

Body Content:

Check for alterations. Google relies heavily on the body content of a page to determine its relevance to queries. If titles, meta descriptions, or headings have been changed, the body content has likely been changed as well. WordPress stores post revisions that you can compare, and the Wayback Machine lets you view earlier archived versions of your page. Google has retired its “cache:” operator, so it can no longer be used to view Google’s cached copy.

Similar to tracking technical changes, keeping track of content changes is a time-consuming and error-prone task.
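Part of that comparison can be automated. The sketch below extracts the title, meta description, and h1 to h3 headings from two HTML snapshots of the same page and reports which fields differ. It assumes you already have both snapshots (for example, a WordPress revision or an archived copy, and the live page); the names `OnPageParser`, `snapshot`, and `changed_fields` are hypothetical, and body text would need a similar extraction step.

```python
from html.parser import HTMLParser


class OnPageParser(HTMLParser):
    """Extracts the title, meta description, and h1-h3 headings."""

    HEADINGS = {"h1", "h2", "h3"}

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = ""
        self.headings = []
        self._current = None  # tag whose text is currently being collected
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "title" or tag in self.HEADINGS:
            self._current = tag
            self._buffer = []
        elif tag == "meta":
            a = dict(attrs)
            if (a.get("name") or "").lower() == "description":
                self.meta_description = (a.get("content") or "").strip()

    def handle_data(self, data):
        if self._current:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            text = " ".join("".join(self._buffer).split())  # collapse whitespace
            if tag == "title":
                self.title = text
            else:
                self.headings.append((tag, text))
            self._current = None


def snapshot(html):
    parser = OnPageParser()
    parser.feed(html)
    return {
        "title": parser.title,
        "meta_description": parser.meta_description,
        "headings": parser.headings,
    }


def changed_fields(old_html, new_html):
    """Return {field: (old_value, new_value)} for every field that differs."""
    old, new = snapshot(old_html), snapshot(new_html)
    return {key: (old[key], new[key]) for key in old if old[key] != new[key]}
```

An empty result means the tracked elements are unchanged, so the body content and technical factors become the next places to look.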

Check for Server Issues

It’s crucial to ensure that your website runs smoothly and without technical problems. Server issues such as slow response times or frequent downtime can cause a drop in rankings. Here are some things to check:

- Server response time: Check your server response time to ensure it’s fast enough. You can use tools like GTmetrix or Pingdom to check this.

- Downtime: Check to see if your website is experiencing any downtime. Use a monitoring tool like UptimeRobot to keep track of uptime and get alerts if there are any issues.

- Hosting: If you’re on shared hosting, there’s a possibility that other websites on the same server are consuming too many resources, which can impact your website’s performance. Consider upgrading to a dedicated server or a VPS to avoid this issue.

It’s important to address any server issues promptly to avoid further negative impacts on your rankings.
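For a quick spot check without a third-party tool, response time can be measured with a few lines of Python. This is a rough sketch under stated assumptions: it times how long the server takes to start responding from wherever the script runs, so results depend on your own network, and the function name `median_response_time` and its `opener` parameter are hypothetical.

```python
import time
import urllib.request
from statistics import median


def median_response_time(url, attempts=3, opener=urllib.request.urlopen,
                         timeout=10):
    """Return the median time in seconds until the first byte is read.

    `opener` defaults to urllib.request.urlopen and can be swapped out,
    for example in tests, for any callable with the same interface.
    """
    timings = []
    for _ in range(attempts):
        start = time.perf_counter()
        with opener(url, timeout=timeout) as response:
            response.read(1)  # read one byte so the response has started
        timings.append(time.perf_counter() - start)
    return median(timings)
```

Taking the median of several attempts reduces the effect of a single slow request. A call such as `median_response_time("https://example.com/")` uses a placeholder URL; substitute one of your affected pages.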
